Summary of concepts for AWS SysOps Administrator Certification.

CloudWatch

AWS CloudWatch Metrics

CloudWatch provides metrics for every services in AWS

Metricis a variable to monitor (CPUUtilization, NetworkIn…)- Metrics belong to

namespaces - Dimension is an attribute of a metric (instance id, environment, etc…).

- Up to 30 dimensions per metric

- Metrics have

timestamps - Can create CloudWatch dashboards of metrics

AWS Provided metrics (AWS pushes them):

BasicMonitoring (default): metrics are collected at a5 minuteinternalDetailedMonitoring (paid): metrics are collected at a1 minuteinterval- Includes

CPU, Network, Disk and Status Check Metrics

Custom metric (yours to push):

- Basic Resolution: 1 minute resolution

- High Resolution: all the way to 1 second resolution

- Include

RAM, application level metrics - Make sure the IAM permissions on the EC2 instance role are correct !

RAM is NOT included in the AWS EC2 metrics

CloudWatch Custom Metrics

You can retrieve custom metrics from your applications or services using the StatsD and collectd protocols. StatsD is supported on both Linux servers and servers running Windows Server. collectd is supported only on Linux

Possibility to define and send your own custom metrics to CloudWatch

Example: memory (RAM) usage, disk space, number of logged in users …

Use API call

PutMetricDataAbility to use dimensions (attributes) to segment metrics

- Instance.id

- Environment.name

Metric resolution (

StorageResolutionAPI parameter - two possible value):- Standard: 1 minute (60 seconds)

- High Resolution: 1/5/10/30 second(s) - Higher cost

Important

👀 EXAM:Accepts metric data points two weeks in the past and two hours in the future (make sure to configure your EC2 instance time correctly)You can

use AWS CLI or APItouploadthedata metricsto CloudWatch.

1 | aws cloudwatch put-metric-data --metric-name PageViewCount --namespace MyService --value 2 --timestamp 2023-01-01-14T08:00:00.000Z |

- high-resolution: high-resolution custom metric, your applications can publish metrics to CloudWatch with 1-second resolution. High-Resolution Alarms allow you to react and take actions faster and support the same actions available today with standard 1-minute alarms.

CloudWatch Dashboards

- Great way to setup custom dashboards for quick access to key metrics and alarms

Dashboards are global- ``Dashboards can include graphs from different AWS accounts and regions``** -

👀 EXAM - You can change the time zone & time range of the dashboards

- You can setup automatic refresh (10s, 1m, 2m, 5m, 15m)

- Dashboards can be shared with people who don’t have an AWS account (public, email address, 3rd party SSO provider through Amazon Cognito)

CloudWatch Logs - Sources

- SDK, CloudWatch Logs Agent, CloudWatch Unified Agent

- Elastic Beanstalk: collection of logs from application

- ECS: collection from containers

- AWS Lambda: collection from function logs

- VPC Flow Logs: VPC specific logs

- API Gateway

- CloudTrail based on filter

- Route53: Log DNS queries

CloudWatch Logs Subscriptions

Get a real-time log events from CloudWatch Logs for processing and analysis- Send to Kinesis Data Streams, Kinesis Data Firehose, or Lambda

Subscription Filter- filter which logs are events delivered to your destinationCross-Account Subscription- send log events to resources in a different AWS account (KDS, KDF)

Alarms

CloudWatch alarms allow you to monitor metrics and trigger actions based on defined thresholds. In this case, you can create a CloudWatch alarm that monitors the CPU utilization metric of the EC2 instance. When the CPU utilization reaches 100%, the alarm will be triggered, and you can configure actions such as sending notifications or executing automated actions to address the unresponsiveness issue.

Alarm Targets - 👀 EXAM

EC2- Stop, Terminate, Reboot, or Recover an EC2 InstanceEC2 Auto Scaling- Trigger Auto Scaling Action, scaling up or down.SNS- Send notification to SNS (from which you can do pretty much anything)creating a

Systems Manager OpsItem.Composite Alarms are monitoring the states of multiple other alarms

EC2 Instance Recovery

StatusCheckFailed_System

Status Check:

Instance status = check the EC2 VMSystem status = check the underlying hardware

Recovery: Same Private, Public, Elastic IP, metadata, placement group👀 Alarms can be created based on CloudWatch Logs Metrics FiltersTest an alarm using

aws set-alarm-state

1 | 👀 aws cloudwatch set-alarm-state --alarm-name "TerminateInHighCPU" --state-value ALARM --state-reason "testing purposes" |

CloudWatch Synthetics

CloudWatch Synthetics canaries are automated/configurable scripts that monitor the availability and performance of applications, endpoints, and APIs. They are designed to simulate user interactions with an application and provide insights into its behavior.

Canaries are created using scripts written in Node.js or Python and are scheduled to run at regular intervals. These scripts perform tasks such as navigating through a website, clicking on specific elements, submitting forms, and validating responses. By executing these predefined actions, canaries can monitor the functionality, responsiveness, and performance of an application or API.

CloudWatch Synthetics canaries collect data on metrics like response time, latency, availability, and success rates. They can also be configured to generate alarms when certain conditions are met, allowing proactive identification and remediation of issues.

Reference

https://docs.aws.amazon.com/awssupport/latest/user/trusted-advisor-check-reference.html

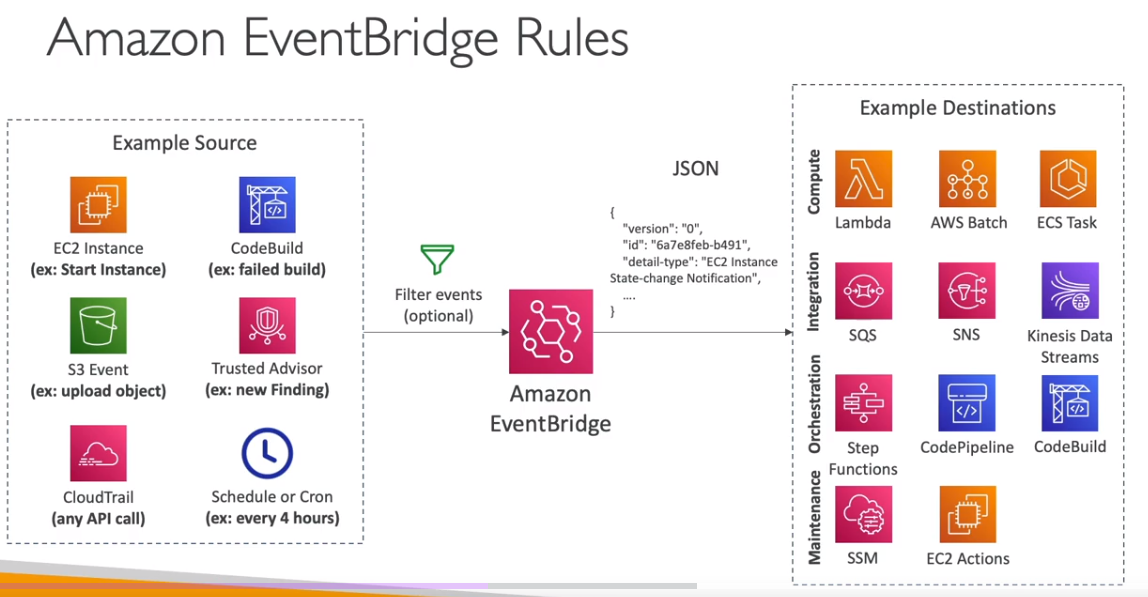

Amazon EventBridge (formerly CloudWatch Events)

- Schedule: Cron jobs (scheduled scripts) - Schedule Every hour -> Trigger script on Lambda function

- Event Pattern: Event rules to react to a service doing something - IAM Root User Sign in Event -> SNS Topic with Email Notification

- Trigger Lambda functions, send SQS/SNS messages…

Service Quotas CloudWatch Alarms

- Notify you when you’re close to a service quota value threshold

- Create CloudWatch Alarms on the Service Quotas console

- Example: Lambda concurrent executions

- Helps you know if you need to request a quota increase or shutdown resources before limit is reached

Alternative: Trusted Advisor CW Alarms

- Limited number of Service Limits checks in Trusted Advisor (~50)

- Trusted Advisor publishes its check results to CloudWatch

👀 For each production EC2 instance, create an Amazon CloudWatch alarm for Status Check Failed: System. Set the alarm action to recover the EC2 instance. Configure the alarm notification to be published to an Amazon Simple Notification Service (Amazon SNS) topic.

Explanation: By creating a CloudWatch alarm for Status Check Failed: System, you can automate the recovery task of EC2 instances (stop, terminate, reboot, or recover your Amazon EC2 instances). When the system health check fails for an EC2 instance, the alarm will be triggered and perform the configured action to recover the instance. This eliminates the need for manual intervention. Additionally, configuring the alarm to publish notifications to an SNS topic allows you to receive notifications whenever a system health check fails.

Status Check

Automated checks to identify hardware and software issues.

System Status Checks

- Monitors problems with AWS systems (software/hardware issues on the physical host, loss of system power, …)

- Check

Personal Health Dashboardfor any scheduled critical maintenance by AWS to your instance’s host - Resolution: stop and start the instance (instance migrated to a new host)

- Either wait for AWS to fix the host, OR

- Move the EC2 instance to a new host = STOP & START the instance (if EBS backed) Instance Status Checks

- Monitors software/network configuration of your instance (invalid network configuration, exhausted memory, …)

- Resolution: reboot the instance or change instance configuration.

Status Checks - CW Metrics & Recovery - 👀 EXAM

- CloudWatch Metrics (1 minute interval)

StatusCheckFailed_SystemStatusCheckFailed_InstanceStatusCheckFailed(for both)

- Option 1:

CloudWatch Alarm- Recover EC2 instance with the same private/public IP, EIP, metadata, and Placement Group

- Send notifications using SNS trigger

- Option 2:

Auto Scaling Group- Set min/max/desired 1 to recover an instance but

won't keep the same private and elastic IP.`

- Set min/max/desired 1 to recover an instance but

Determine which instance use the most bandwidth

NetworkIn and NetworkOut

Identify the processing power required

👀 CPUUtilization specifies the percentage of allocated EC2 compute units that are currently in use on the instance. This metric identifies the processing power required to run an application on a selected instance. This metric is expressed in Percent.

Number of users.

👀 ActiveConnectionCount This metric represents the total number of concurrent TCP connections active from clients to the load balancer and from the load balancer to targets.

RAMUtilization is NOT available as an EC2 metric

RAMUtilization You can publish your own metrics to CloudWatch using the AWS CLI or an API. You can view statistical graphs of your published metrics with the AWS Management Console. Metrics produced by AWS services are standard resolution by default.

5xx server errors

To monitor the number of 500 Internal Error responses that you’re getting, you can enable Amazon CloudWatch metrics. Amazon S3 CloudWatch request metrics include a metric for 5xx server errors.

4xxx

You can set an alarm to notify operators when the 404 filter metric exceeds a threshold.

👀 HTTPCode_ELB_4XX_Count metric stands for the number of HTTP 4XX client error codes that originate from the load balancer. This count does not include response codes generated by targets.

Events

You can run CloudWatch Events rules according to a schedule.

EBS Snapshots

It is possible to create an automated snapshot of an existing Amazon Elastic Block Store (Amazon EBS) volume on a schedule. You can choose a fixed rate to create a snapshot every few minutes or use a cron expression to specify that the snapshot is made at a specific time of day.

Snapshots are incremental backups, which means that only the blocks on the device that have changed after your most recent snapshot are saved. This minimizes the time required to create the snapshot and saves on storage costs by not duplicating data. Each snapshot contains all of the information that is needed to restore your data (from the moment when the snapshot was taken) to a new EBS volume.

Reference: Schedule Automated Amazon EBS Snapshots Using CloudWatch Events

Filters - QUESTION

You can create a count of 404 errors and exclude other 4xx errors with a filter pattern on 404 errors.

Agents

If your AMI contains a CloudWatch agent, it’s automatically installed on EC2 instances when you create an EC2 Auto Scaling group. With the stock Amazon Linux AMI, you need to install it (AWS recommends to install via yum).

Install Agents to track the state of each of the instances

You must attach the CloudWatchAgentServerRole IAM role to the EC2 instance to be able to run the CloudWatch agent on the instance. This role enables the CloudWatch agent to perform actions on the instance.

Publish custom metrics to CloudWatch.

You can publish your own metrics to CloudWatch using the AWS CLI or an API. You can view statistical graphs of your published metrics with the AWS Management Console. CloudWatch stores data about a metric as a series of data points. Each data point has an associated time stamp. You can even publish an aggregated set of data points called a statistic set.

The put-metric-data command publishes metric data to Amazon CloudWatch, which associates it with the specified metric. If the specified metric does not exist, CloudWatch creates the metric which can take up to fifteen minutes for the metric to appear in calls to ListMetrics.

Collect process metrics with the procstat plugin

The procstat plugin enables you to collect metrics from individual processes. It is supported on Linux servers and on servers running Windows Server 2012 or later.

Dashboard Body Structure and Syntax - EXAM

A DashboardBody is a string in JSON format. It can include an array of between 0 and 500 widget objects, as well as a few other parameters. The dashboard must include a widgets array, but that array can be empty.

When deploying resources using AWS CloudFormation, the goal is often to define as much of the desired infrastructure as possible directly within the template. This is achieved by taking the JSON representation of the prototype dashboard and embedding it directly within the CloudFormation template using the DashboardBody property.

1 | Resources: |

CloudTrail

Provides governance, compliance and audit for your AWS Account

- CloudTrail is

enabled by default! - Get

an history of events / API calls made within your AWS Accountby:- Console

- SDK

- CLI

- AWS Services

- Can put logs from CloudTrail into CloudWatch Logs or S3

A trail can be applied to All Regions (default) or a single Region- If a resource is deleted in AWS, investigate CloudTrail first!

CloudTrail Insights

- 👀 Enable

CloudTrail Insights to detect unusual activityin your account:- inaccurate resource provisioning

- hitting service limits

- Bursts of AWS IAM actions

- Gaps in periodic maintenance activity

- CloudTrail Insights analyzes normal management events to create a baseline

- And then

continuously analyzes write events to detect unusual patterns.- Anomalies appear in the CloudTrail console

- Event is sent to Amazon S3

- An EventBridge event is generated (for automation needs)

CloudTrail - Integration with EventBridge

- Used to react to any API call being made in your account

- CloudTrail is not “real-time”:

- Delivers an event within 15 minutes of an API call

- Delivers log files to an S3 bucket every 5 minutes

CloudTrail - Organizations Trails

- A trail that will log all events for all AWS accounts in an AWS Organization

- Log events for management and member accounts

- Trail with the same name will be created in every AWS account (IAM permissions)

- Member accounts can’t remove or modify the organization trail (view only)

CloudTrail - Log File Integrity Validation

Digest Files:

References the log files for the last hour and contains a hash of each

Stored in the same S3 bucket as log files (different folder)

Helps you determine whether a log file was modified/deleted after CloudTrail delivered itHashing using SHA-256, Digital Signing using SHA- 256 with RSĂProtect the S3 bucket using bucket policy, versioning, MFA Delete protection, encryption, object lockProtect files using IAM

Q. To ensure that SysOps administrators can easily verify that the CloudTrail log files have not been deleted or changed, the following action should be taken:

Enable

CloudTrail log file integrity validationwhen the trail is created or updated.Explanation: EnablingCloudTrail log file integrity validationensures that the log files are protected against tampering or unauthorized modification. CloudTrail uses SHA-256 hashes to validate the integrity of the log files stored in Amazon S3. By enabling this feature, the SysOps administrators can easily verify the integrity of the log files and ensure that they have not been deleted or changed

Cloud Trail - Integration with EventBridge AWS CloudTrail

- Used to react to any API call being made in your account

- Cloud Trail is not “real-time”:

- Delivers an event within 15 minutes of an API call

- Delivers log files to an S3 bucket every 5 minutes

CloudTrail - Organizations Trails

- A trail that will log all events for all AWS accounts in an AWS Organization

- Log events for management and member accounts

- Trail with the same name will be created in every AWS account (IAM permissions)

Member accounts can’t remove or modify the organization trail (view only)

👀 AWS Config

- Helps with

auditingand recordingcomplianceof your AWS resources. - Helps

record configurationsand changes over time. Questions that can be solved by AWS Config:- Is there unrestricted SSH access to my security groups?

- Do my buckets have any public access?

- How has my ALB configuration changed over time?

- You can

receive alerts(SNS notifications) for any changes - AWS Config is a

per-region service. - Can be aggregated across regions and accounts.

- Possibility of storing the configuration data into S3 (analyzed by Athena)

AWS Config keeps track of the configuration of your AWS resources and their relationships to other resources. It can also evaluate those AWS resources for compliance. This service uses rules that can be configured to evaluate AWS resources against desired configurations.

For example,

- can track

changestoCloudFormation stacks.

AWS Config can track changes to CloudFormation stacks. A CloudFormation stack is a collection of AWS resources that you can manage as a single unit. With AWS Config, you can review the historical configuration of your CloudFormation stacks and review all changes that occurred to them.

For more information about how AWS Config can track changes to CloudFormation deployments, see cloudformation-stack-drift-detection-check.

- there are AWS Config

rulesthatcheckwhether or not yourAmazon S3 buckets have logging enabledor yourIAM users have an MFA device enabled.

👀 AWS Config is a service that enables you to assess, audit, and evaluate the configurations of your AWS resources. It provides detailed inventory and configuration history of your resources, as well as configuration change notifications. With AWS Config, you can track the configuration of your S3 bucket, including its bucket policy.

AWS Config rules use AWS Lambda functions to perform the compliance evaluations, and the Lambda functions return the compliance status of the evaluated resources as compliant or noncompliant. The non-compliant resources are remediated using the remediation action associated with the AWS Config rule. With the Auto-Remediation feature of AWS Config rules, the remediation action can be executed automatically when a resource is found non-compliant.

AWS Config Auto Remediation feature has auto remediate feature for any non-compliant S3 buckets using the following AWS Config rules:

s3-bucket-logging-enabled s3-bucket-server-side-encryption-enabled s3-bucket-public-read-prohibited s3-bucket-public-write-prohibited

These AWS Config rules act as controls to prevent any non-compliant S3 activities.

Config Rules

AWS Config provides a number of AWS managed rules that address a wide range of security concerns such as checking if you encrypted your Amazon Elastic Block Store (Amazon EBS) volumes, tagged your resources appropriately, and enabled multi-factor authentication (MFA) for root accounts.

- Can use AWS

managedconfigrules(over 75) - Can make

custom config rules (must be defined in AWS Lambda).- Ex: evaluate if each EBS disk is of type gp2

- Ex: evaluate if each EC2 instance is t2.micro

- Rules can be evaluated / triggered:

- For each config change

- And / or: at regular time intervals

AWS Config Rules does not prevent actions from happening (no deny).

Managed rules:

require-tags: managed rule in AWS Config. This rule checks if a resource contains the tags that you specify.

Config Rules - Remediations

Has auto remediate feature for any non-compliant S3 buckets using the following AWS Config rules:

s3-bucket-logging-enabled s3-bucket-server-side-encryption-enabled s3-bucket-public-read-prohibited s3-bucket-public-write-prohibited

These AWS Config rules act as controls to prevent any non-compliant S3 activities.

Automate remediation of non-compliant resources using SSM Automation Documents.- Use AWS-Managed Automation Documents or create custom Automation Documents

Tip: you can create custom Automation Documents that invokes Lambda function.

- You can set

Remediation Retriesif the resource is still non-compliant after autoremediation.

AWS Config Auto Remediation

Config Rules - Notifications

- Use EventBridge to trigger notifications when AWS resources are noncompliant

- Ability to send configuration changes and compliance state notifications to SNS (all events - use SNS Filtering or filter at client-side)

👀 QUESTION- If there are EC2s that are terminated in an environment, you should use the

[EIP-attached Config rule](https://docs.aws.amazon.com/config/latest/developerguide/eip-attached.html)to find EIPs that are unattached in your environment.

AWS Config - Aggregators

- The aggregator is created

in one central aggregator account. - Aggregates

rules, resources, etc... across multiple accounts & regions. - If using

AWS Organizations, no need for individual Authorization - Rules are created in each individual source AWS account

- Can

deploy rulesto multiple target accounts usingCloudFormation StackSets

CloudWatch vs CloudTrail vs Config

- CloudWatch

- Performance monitoring (metrics, CPU, network, etc…) & dashboards

- Events & Alerting

- Log Aggregation & Analysis

- CloudTrail

- Record API calls made within your Account by everyone

- Can define trails for specific resources

- Global Service

- Config

- Record configuration changes

- Evaluate resources against compliance rules

- Get timeline of changes and compliance

AWS Task Orchestrator and Executor (AWSTOE) - 👀 EXAM

Use the AWS Task Orchestrator and Executor (AWSTOE) application to orchestrate complex workflows, modify system configurations, and test your systems without writing code. This application uses a declarative document schema. Because it is a standalone application, it does not require additional server setup.

`AWS Artifact - 👀 EXAM

AWS Artifact keeps compliance-related reports and agreements.

RDS

Advantage over using RDS versus deploying

- RDS is a managed service:

- Automated provisioning, OS patching

- Continuous backups and restore to specific timestamp (Point in Time Restore)!

- Monitoring dashboards

- Read replicas for improved read performance

- Multi AZ setup for DR (Disaster Recovery)

- Maintenance windows for upgrades

- Scaling capability (vertical and horizontal)

- Storage backed by EBS (gp2 or io1)

- BUT you can’t SSH into your instances

RDS Read Replicas for read scalability

- Up to 15 Read Replicas

- Within

AZ, Cross AZ or Cross Region. - Replication is

ASYNC. - Replicas can be promoted to their own DB.

RDS Read Replicas - Network Cost

- In AWS there’s a network cost when data goes from one AZ to another

For RDS Read Replicas within the same region, you don’t pay that fee.

RDS Multi AZ (Disaster Recovery)

SYNCreplication.- One DNS name - automatic app failover to standby

- Increase

availability. - Failover in case of loss of AZ, loss of network, instance or storage failure

👀 Exam - The Read Replicas be setup as Multi AZ for Disaster Recovery (DR).

Lambda in VPC

- You must define the VPC ID, the Subnets and the Security Groups

- Lambda will create an ENI (Elastic Network Interface) in your subnets

AWSLambdaVPCAccessExecutionRole

RDS Proxy for AWS Lambda

- When using Lambda functions with RDS, it opens and maintains a database connection

- This can result in a

“TooManyConnections”exception - With

RDS Proxy, you no longer need code that handles cleaning up idle connections and managing connection pools

DB Parameter Groups

You can configure the DB engine using Parameter Groups

Dynamic parameters are applied immediately

Static parameters are applied after instance reboot

You can modify parameter group associated with a DB (must reboot)

Must-know parameter:- PostgreSQL / SQL Server:

rds.force_ssl=1=> force SSL connections - MySQL / MariaDB:

require_secure_transport=1 => force SSL connections

- PostgreSQL / SQL Server:

RDS Events & Event Subscriptions

RDS keeps record of events related to:

DB instances

Snapshots

Parameter groups, security groups …

RDS Event Subscriptions

- Subscribe to events to be notified when an event occurs using SNS

- Specify the Event Source (instances, SGs, …) and the Event Category (creation, failover, …)

RDS delivers events to EventBridge

RDS with CloudWatch

CloudWatch metrics associated with RDS (gathered from the hypervisor):

DatabaseConnectionsSwapUsageReadIOPS / WriteIOPSReadLatency / WriteLatencyReadThroughPut / WriteThroughPutDiskQueueDepthFreeStorageSpace- To monitor the available storage space for an RDS DB instanceBinLogDiskUsage: Tracks the amount of disk space occupied by binary logs on the master.

FreeableMemory: Tracks the amount of available random access memory and not the available storage space.

DiskQueueDepth: Provides the number of outstanding IOs (read/write requests) waiting to access the disk.

Enhanced Monitoring(gathered from an agent on the DB instance).👀- Useful when you need to see how

different processes or threads use the CPU.

- Useful when you need to see how

Access to over 50 new CPU, memory, file system, and disk I/O metrics

Amazon RDS provides metrics in real time for the operating system (OS) that your DB instance runs on. You can view the metrics for your DB instance using the console. Also, you can consume the `Enhanced Monitoring`` JSON output from Amazon CloudWatch Logs in a monitoring system of your choice.

RDS storage autoscaling - 👀 EXAM

With RDS storage autoscaling, you can set the desired maximum storage limit. Autoscaling will manage the storage size. RDS storage autoscaling monitors actual storage consumption and then scales capacity automatically when actual utilization approaches the provisioned storage capacity.

Amazon Aurora DB

- Aurora is a proprietary technology from AWS (not open sourced)

- Postgres and MySQL are both supported as Aurora DB (that means your drivers will work as if Aurora was a Postgres or MySQL database)

- Aurora is “AWS cloud optimized” and claims 5x performance improvement over MySQL on RDS, over 3x the performance of Postgres on RDS

Aurora storage automatically grows in increments of 10GB, up to 128 TB.- Aurora can have up to 15 replicas and the replication process is faster than MySQL (sub 10 ms replica lag)

- Failover in Aurora is instantaneous. It’s HA (High Availability) native.

- Aurora costs more than RDS (20% more) - but is more efficient

Aurora High Availability and Read Scaling

One Aurora Instance takes writes (master)

Support for Cross Region ReplicationShared storage Volume: Replication + Self Healing + Auto expandingReader Endpoint Connection Load Balancing

RDS & Aurora Security

At-rest encryption:- Database master & replicas encryption using AWS KMS - must be defined as launch time

- If the master is not encrypted, the read replicas cannot be encrypted To encrypt an un-encrypted database, go through a DB snapshot & restore as encrypted

In-flight encryption: TLS-ready by default, use the AWS TLS root certificates client-sideIAM Authentication: IAM rolesto connect to your database (instead of username/pw)Security Groups: Control Network access to your RDS / Aurora DBNo SSH available except on RDS CustomAudit Logs can be enabled and sent to CloudWatch Logs for longer retention

Aurora for SysOps

You can associate a priority tier (0-15) on each Read Replica

- Controls the failover priority

- RDS will promote the Read Replica with the highest priority (lowest tier)

- If replicas have the same priority, RDS promotes the largest in size

- If replicas have the same priority and size, RDS promotes arbitrary replica

You can migrate an RDS MySQL snapshot to Aurora MySQL Cluster

Connect to Amazon Aurora DB cluster from outside a VPC

To connect to an Amazon Aurora DB cluster directly from outside the VPC, the instances in the cluster must meet the following requirements:

- The DB instance must have a public IP address.

- The DB instance must be running in a publicly accessible subnet.

For Amazon Aurora DB instances, you can’t choose a specific subnet. Instead, choose a DB subnet group when you create the instance. Create a DB subnet group with subnets of similar network configuration. For example, a DB subnet group for Public subnets.

Aurora Replicas - TODO

Aurora Replicas are independent endpoints in an Aurora DB cluster, best used for scaling read operations and increasing availability. Up to 15 Aurora Replicas can be distributed across the Availability Zones that a DB cluster spans within an AWS Region. The DB cluster volume is made up of multiple copies of the data for the DB cluster. However, the data in the cluster volume is represented as a single, logical volume to the primary instance and to Aurora Replicas in the DB cluster.

Alternatively, you can also use Amazon Aurora Multi-Master which is a feature of the Aurora MySQL-compatible edition that adds the ability to scale out write performance across multiple Availability Zones, allowing applications to direct read/write workloads to multiple instances in a database cluster and operate with higher availability.

Metrics to generate reports on the Aurora DB Cluster and its replicas

AuroraReplicaLagMaximum- This metric captures themaximum amount of lag between the primary instance and each Aurora DB instance in the DB cluster.AuroraBinlogReplicaLag- This metric captures theamount of time a replica DB cluster runningon Aurora MySQL-Compatible Edition lags behind the source DB cluster. This metric reports the value of the Seconds_Behind_Master field of the MySQL SHOW SLAVE STATUS command. This metric is useful for monitoring replica lag between Aurora DB clusters that are replicating across different AWS Regions.AuroraReplicaLag- This metric captures theamount of lagan Aurora replica experienceswhen replicating updates from the primary instance.InsertLatency- This metric capturesthe average duration of insert operations.

Aurora Reader Endpoint - 👀 EXAM

To perform queries, you can connect to the reader endpoint, with Aurora automatically performing load-balancing among all the Aurora Replicas.

A reader endpoint for an Aurora DB cluster provides load-balancing support for read-only connections to the DB cluster. Use the reader endpoint for read operations, such as queries. By processing those statements on the read-only Aurora Replicas, this endpoint reduces the overhead on the primary instance. It also helps the cluster to scale the capacity to handle simultaneous SELECT queries, proportional to the number of Aurora Replicas in the cluster. Each Aurora DB cluster has one reader endpoint.

Reference:Amazon Aurora connection management

Amazon ElastiCache Overview

The same way RDS is to get managed Relational Databases…

ElastiCache is to get managed

Redis or MemcachedCaches are in-memory databases with really high performance, low latency

Helps reduce load off of databases for read intensive workloadsHelps make your application statelessAWS takes care of OS maintenance / patching, optimizations, setup, configuration, monitoring, failure recovery and backups

Using ElastiCache involves heavy application code changes

ElastiCache Replication (Redis): Cluster Mode Disabled

- One primary node, up to 5 replicas

- Asynchronous Replication.

- Therefore, when a primary node fails over to a replica, a small amount of data might be lost due to replication lag.

- The primary node is used for read/write

- The other nodes are read-only

One shard, all nodes have all the data- Guard against data loss if node failure

- Multi-AZ enabled by default for failover

- Helpful to scale read performance

- Horizontal and vertical

ElastiCache Replication: Cluster Mode Enabled

Data is partitioned across shards (helpful to scale writes)

- Automatically increase/decrease the desired shards or replicas

- Supports both Target Tracking and Scheduled Scaling Policies

- Works only for Redis with Cluster Mode Enabled

Memcached

Fix high Memcached evictions

To fix the issue of high Memcached evictions in Amazon ElastiCache, the following actions should be taken:

Increasethesize of the nodesin the cluster: This allows for more available memory in each node, reducing the likelihood of evictions due to limited cache space.Increasethenumber of nodesin the cluster: By adding more nodes, the overall cache capacity increases, reducing the chance of evictions.

The Evictions metric for Amazon ElastiCache for Memcached represents the number of nonexpired items that the cache evicted to provide space for new items. If you are experiencing evictions with your cluster, it is usually a sign that you need to scale up (use a node that has a larger memory footprint) or scale out (add additional nodes to the cluster) to accommodate the additional data

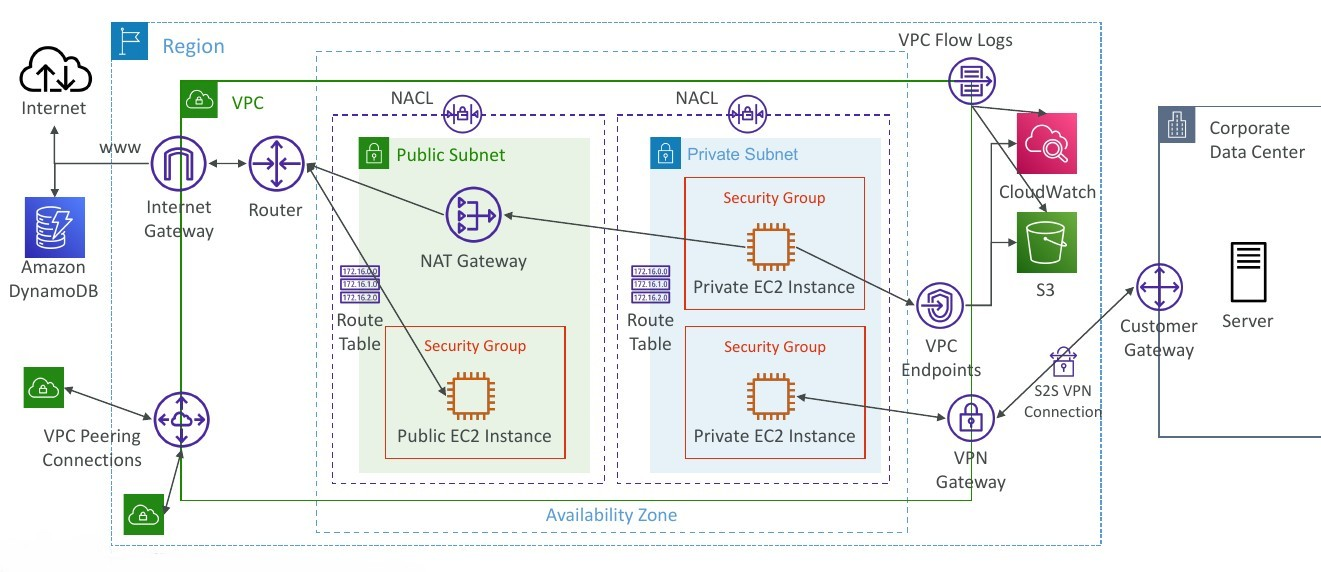

VPC

With Amazon Virtual Private Cloud (Amazon VPC), you can launch AWS resources in a logically isolated virtual network that you’ve defined. This virtual network closely resembles a traditional network that you’d operate in your own data center, with the benefits of using the scalable infrastructure of AWS.

Configuration

Regardless of the type of subnet, the internal IPv4 address range of the subnet is always private. AWS never announces these address blocks to the internet.

When you create a VPC, you must specify a range of IPv4 addresses for the VPC in the form of a Classless Inter-Domain Routing (CIDR) block; for example, 10.0.0.0/16. This is the primary CIDR block for your VPC.

Subnets created in a VPC can communicate with each other, this is default behaviour. The main route table facilitates this communication.

Reference: How Amazon VPC works

CIDR - IPv4

Classless

Inter-Domain Routing- a method for allocating IP addressesUsed in Security Groupsrules andAWS networkingin generalA CIDR consists of two components

Base IP

- Represents an IP contained in the range (XX.XX.XX.XX)

- Example: 10.0.0.0, 192.168.0.0, …

Subnet Mask

- Defines how many bits can change in the IP

- Example: /0, /24, /32

- Can take two forms:

- /8 ó 255.0.0.0

- /16 ó 255.255.0.0

- /24 ó 255.255.255.0

- /32 ó 255.255.255.255

Public vs. Private IP (IPv4)

The Internet Assigned Numbers Authority (IANA) established certain blocks of IPv4 addresses for the use of private (LAN) and public (Internet) addresses

Private IPcan only allow certain values:- 10.0.0.0 - 10.255.255.255 (10.0.0.0/8) <- in big networks

- 172.16.0.0 - 172.31.255.255 (172.16.0.0/12) <- AWS default VPC in that range

- 192.168.0.0 - 192.168.255.255 (192.168.0.0/16) <- e.g., home networks

All the rest of the IP addresses on the Internet are Public

VPC in AWS - IPv4

VPC = Virtual Private CloudYou can have multiple VPCs in an AWS region (max. 5 per region - soft limit)

- Max. CIDR per VPC is 5, for each CIDR:

- Min. size is /28 (16 IP addresses)

Max. size is /16 (65536 IP addresses)

Because VPC is private, only the Private IPv4 ranges are allowed:

- 10.0.0.0 - 10.255.255.255 (10.0.0.0/8)

- 172.16.0.0 - 172.31.255.255 (172.16.0.0/12)

- 192.168.0.0 - 192.168.255.255 (192.168.0.0/16)

Your VPC CIDR should NOT overlap with your other networks (e.g., corporate)

VPC - Subnet (IPv4)

- AWS reserves

5 IP addresses(first 4 & last 1) in each subnet - These 5 IP addresses are not available for use and can’t be assigned to anEC2 instance

- Example: if CIDR block 10.0.0.0/24, then reserved IP addresses are:

- 10.0.0.0 - Network Address

- 10.0.0.1 - reserved by AWS for the VPC router

- 10.0.0.2 - reserved by AWS for mapping to Amazon-provided DNS

- 10.0.0.3 - reserved by AWS for future use

- 10.0.0.255 - Network Broadcast Address. AWS does not support broadcast in a VPC, therefore the address is reserved

Exam Tip, if you need 29 IP addresses for EC2 instances:- You can’t choose a subnet of size /27 (32 IP addresses, 32 - 5 = 27 < 29)

- You need to choose a subnet of size /26 (64 IP addresses, 64 - 5 = 59 > 29)

Internet Gateway (IGW)

Allows resources (e.g., EC2 instances) in a VPC connect to the Internet

It scales horizontally and is highly available and redundant

Must be created separately from a VPC

One VPC can only be attached to one IGW and vice versa

Internet Gateways on their own do not allow Internet access…

Route tables must also be edited!

Bastion Hosts

Is an ec2 instance, it’s espcial because it’s in a public subnet, with its segurity group

- We can use a Bastion Host to SSH into our private EC2 instances

- The bastion is in the public subnet which is then connected to all other private subnets

Bastion Host security group must allowinbound from the internet on port 22 from restricted CIDR, for example the public CIDR of your corporationSecurity Group of the EC2 Instancesmust allow the Security Group of the Bastion Host, or the private IP of the Bastion host

NAT Instance (outdated, but still at the exam)

- NAT = Network Address Translation

- Allows EC2 instances in private subnets toconnect to the Internet

- Must be launched in a public subnet

- Must disable EC2 setting:

Source / destination Check - Must have Elastic IP attached to it

- Route Tables must be configured to route traffic from private subnets to the NAT instance

NAT Gateway

- AWS-managed NAT, higher bandwidth, high availability, no administration

- Pay per hour for usage and bandwidth

- NATGW is created in a specific Availability Zone, uses an Elastic IP

- Can’t be used by EC2 instance in the same subnet (only from other subnets)

- Requires an IGW (Private Subnet => NATGW => IGW)

- 5 Gbps of bandwidth with automatic scaling up to 45 Gbps

- No Security Groups to manage / required

NAT Gateway with High Availability

NAT Gateway is resilient within a single Availability Zone- Must create

multiple NAT Gatewaysinmultiple AZsfor fault-tolerance - There is no cross-AZ failover needed because if an AZ goes down it doesn’t need NAT

Connect the Lambda function to a private subnet that has a route to a NAT gateway deployed in a public subnet of the VPC.

Explanation: By connecting the Lambda function to a private subnet with a route to a NAT gateway, the function can access resources within the VPC while also leveraging the NAT gateway to access the internet and communicate with third-party APIs. The NAT gateway acts as a bridge between the private subnet and the internet, allowing the Lambda function to securely access external resources.

DNS Resolution in VPC

DNS Resolution (enableDnsSupport)- Decides if DNS resolution from Route 53 Resolver server is supported for the VPC

- True (default): it queries the Amazon Provider DNS Server at

169.254.169.253or the reserved IP address at the base of theVPC IPv4 network range plus two (.2).

enableDnsSupport - Indicates whether the DNS resolution is supported for the VPC. If this attribute is false, the Amazon-provided DNS server in the VPC that resolves public DNS hostnames to IP addresses is not enabled. If this attribute is true, queries to the Amazon provided DNS server at the 169.254.169.253 IP address, or the reserved IP address at the base of the VPC IPv4 network range plus two will succeed.

DNS Hostnames (enableDnsHostnames)- By default,

- True => default VPC

- False => newly created VPCs

- Won’t do anything unless enableDnsSupport=true

- If True, assigns public hostname to EC2 instance if it has a public IPv4

enableDnsHostnames - Indicates whether the instances launched in the VPC get public DNS hostnames. If this attribute is true, instances in the VPC get public DNS hostnames, but only if the enableDnsSupport attribute is also set to true.

By default, both attributes are set to true in a default VPC or a VPC created by the VPC wizard. By default, only the enableDnsSupport attribute is set to true in a VPC created on the Your VPCs page of the VPC console or using the AWS CLI, API, or an AWS SDK.

DNS Resolution in VPC

- If you use custom DNS domain names in a Private Hosted Zone in Route 53, you must set both these attributes (enableDnsSupport & enableDnsHostname) to true

Network Access Control List (NACL)

- NACL are like a firewall which control traffic from and to subnets

- One NACL per subnet, new subnets are assigned the Default NACL

- You define NACL Rules:

- Rules have a number (1-32766), higher precedence with a lower number

- First rule match will drive the decision

- Example: if you define #100 ALLOW 10.0.0.10/32 and #200 DENY 10.0.0.10/32, the IP address will be allowed because 100 has a higher precedence over 200

- The last rule is an asterisk (*) and denies a request in case of no rule match

- AWS recommends adding rules by increment of 100

- Newly created NACLs will deny everything

- NACL are a

great way of blocking a specific IP addressat the subnet level

Default NACL

Accepts everything inbound/outboundwith the subnets it’s associated with

Ephemeral Ports

- For any two endpoints to establish a connection, they must use ports

- Clients connect to a

defined port, and expect a response on anephemeral port - Different Operating Systems use different port ranges, examples:

- IANA & MS Windows 10 -> 49152 - 65535

- Many Linux Kernels -> 32768 - 60999

| Security Group | NACL |

|---|---|

| Operates at the instance level | Operates at the subnet level |

| Supports allow rules only | Supports allow rules and deny rules |

Stateful: return traffic is automatically allowed, regardless of any rules |

Stateless: return traffic must be explicitly allowed by rules (think of ephemeral ports) |

| All rules are evaluated before deciding whether to allow traffic | Rules are evaluated in order (lowest to highest) when deciding whether to allow traffic, first match wins |

| Applies to an EC2 instance when specified by someone | Automatically applies to all EC2 instances in the subnet that it’s associated with |

VPC - Reachability Analyzer

- A network diagnostics tool that troubleshoots network connectivity between two endpoints in your VPC(s)

- It builds a model of the network configuration, then checks the reachability based on these configurations (

it doesn’t send packets) - When the destination is

Reachable- it produces hop-by-hop details of the virtual network pathNot reachable- it identifies the blocking component(s) (e.g., configuration issues in SGs, NACLs, Route Tables, …)

- Use cases: troubleshoot connectivity issues, ensure network configuration is as intended, …

VPC Peering

Privately connect two VPCs using AWS’ network

Make them behave as if they were in the same network

Must not have overlapping CIDRs

VPC Peering connection is

NOT transitive(must be established for each VPC that need to communicate with one another)You must update route tables in each VPC’s subnets to ensure EC2 instances can communicate with each otherYou can create VPC Peering connection between VPCs in

different AWS accounts/regionsYou can reference a security group in a peered VPC (

works cross accounts - same region)

VPC Endpoint (AWS PrivateLink)

A VPC Endpoint allows you to connect your VPC directly to AWS services without the need for internet gateways, NAT gateways, or VPN connections. It enables private communication between your VPC and the AWS service without going over the internet.

- Most secure & scalable way to

expose a service to 1000s of VPC(own or other accounts) - Does `not require VPC peering, internet gateway, NAT, route tables… (magical..)

- Requires a

network load balancer(Service VPC) andENI(Customer VPC)or GWLB - If the NLB is in multiple AZ, and the ENIs in multiple AZ, the solution is fault tolerant!

To configure a VPC Endpoint for accessing AWS Systems Manager APIs, you can follow these steps:

- Create a VPC Endpoint for AWS Systems Manager in your Amazon VPC. This creates an elastic network interface with a private IP address within your VPC.

- Update the route tables in your VPC to route traffic destined for the AWS Systems Manager API endpoints to the VPC Endpoint. This ensures that traffic is directed through the VPC Endpoint instead of going over the internet.

- Verify that your on-premises instances and AWS managed instances are configured to use the appropriate VPC and route tables.

Types of Endpoints

Interface Endpoints (powered by PrivateLink)- Provisions an ENI (private IP address) as an entry point (must attach a Security Group)

- Supports most AWS services

- $ per hour + $ per GB of data processed

Gateway Endpoints- Provisions a gateway and must be used as

a target in a route table (does not use security groups) - Supports both S3 and DynamoDB

- Free

- Provisions a gateway and must be used as

Gateway or Interface Endpoint for S3?

Gateway is most likely going to be preferred all the time at the exam.- Cost: free for Gateway, $ for interface endpoint

- Interface Endpoint is preferred access is

requiredfromon premises(Site to Site VPN or Direct Connect), a different VPC or a different region.

VPC Flow Logs

- Capture information about IP traffic going into your interfaces:

- VPC Flow Logs

- Subnet Flow Logs

- Elastic Network Interface (ENI) Flow Logs

- Helps to monitor & troubleshoot connectivity issues

- Flow logs data can go to S3, CloudWatch Logs, and Kinesis Data Firehose

- Captures network information from AWS managed interfaces too: ELB, RDS, ElastiCache, Redshift, WorkSpaces, NATGW, Transit Gateway…

VPC Flow Logs Syntax

srcaddr & dstaddr- help identify problematic IPsrcport & dstport- help identity problematic portsAction- success or failure of the request due to Security Group / NACL- Can be used for analytics on usage patterns, or malicious behavior

Query VPC flow logs using Athena on S3 or CloudWatch Logs Insights- Flow Logs examples: https://docs.aws.amazon.com/vpc/latest/userguide/flow-logs-records-examples.html

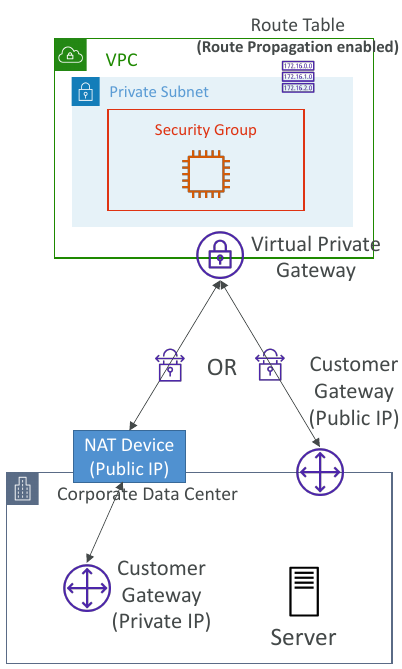

AWS Site-to-Site VPN

Virtual Private Gateway (VGW)- VPN concentrator on the AWS side of the VPN connection

- VGW is created and attached to the VPC from which you want to create the Site-to-Site VPN connection

- Possibility to customize the ASN (Autonomous System Number)

Customer Gateway (CGW)- Software application or physical device on customer side of the VPN connection

- https://docs.aws.amazon.com/vpn/latest/s2svpn/your-cgw.html#DevicesTested

Site-to-Site VPN Connections

Customer Gateway Device (On-premises)- 👀 What IP address to use?

- Public Internet-routable IP address for your Customer Gateway device

- If it’s behind a NAT device that’s enabled for NAT traversal (NAT-T), use the public IP address of the NAT device

- 👀 What IP address to use?

- 👀

Important step: enableRoute Propagationfor the Virtual Private Gateway in the route table that is associated with your subnets - 👀 If you need to

ping your EC2 instancesfrom on-premises, make sure you add theICMP protocol on the inbound of your security groups.

AWS VPN CloudHub

- Provide secure communication between multiple sites, if you have multiple VPN connections

Low-costhub-and-spoke model for primary or secondary network connectivity between different locations (VPN only)- It’s a VPN connection so it goes over the

public Internet - To set it up, connect multiple VPN connections on the same VGW, setup dynamic routing and configure route tables

To create a VPN

- Create Customer Gateway

- Create Virtual Private Gateway

- Use Site-to-Site VPN connection for both VGW and customers Gateway.

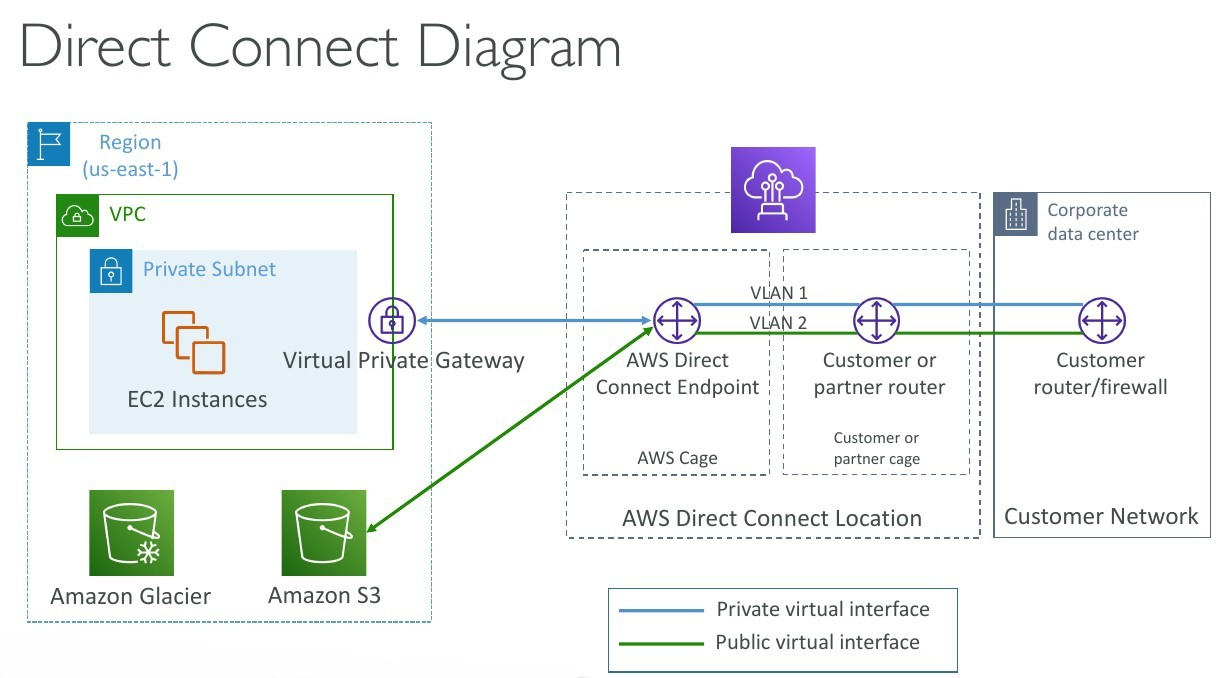

Direct Connect (DX)

- Provides a dedicated

privateconnection from aremote network to your VPC. - Dedicated connection must be setup between your DC and AWS Direct Connect locations

- You

needto setup aVirtual Private Gatewayon your VPC - Access public resources (S3) and private (EC2) on same connection

- Use Cases:

- Increase bandwidth throughput - working with large data sets - lower cost

- More consistent network experience - applications using real-time data feeds

- Hybrid Environments (on prem + cloud)

- Supports both IPv4 and IPv6

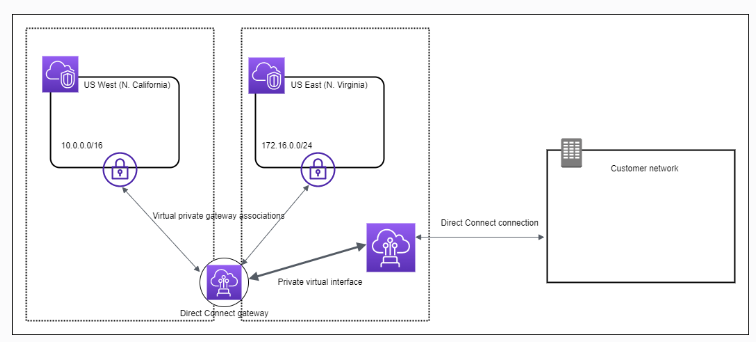

Direct Connect Gateway

If you want to setup a Direct Connect to one or more VPC in many different regions (same account), you must use a Direct Connect Gateway. - Exam 👀

Direct Connect - Connection Types

Dedicated Connections: 1Gbps,10 Gbps and 100 Gbps capacity- Physical ethernet port dedicated to a customer

- Request made to AWS first, then completed by AWS Direct Connect Partners

Hosted Connections: 50Mbps, 500 Mbps, to 10 Gbps- Connection requests are made via AWS Direct Connect Partners

- Capacity can be

added or removed on demand - 1, 2, 5, 10 Gbps available at select AWS Direct Connect Partners

- Lead times are often longer than 1

month to establish a new connection- 👀 EXAM

Direct Connect - Encryption

- Data in transit is

not encryptedbut is private AWS Direct Connect + VPN providesan IPsec-encrypted private connection

Direct Connect - Resiliency

High Resiliency for Critical Workloads

Maximum Resiliency for Critical Workloads

Site-to-Site VPN connection as a backup

In case Direct Connect fails, you can set up a backup Direct Connect connection (expensive), or a Site-to-Site VPN connection.

Transit Gateway

For having transitive peering between thousands of VPC and on-premises, hub-and-spoke (star) connectionRegional resource, can work cross-region- Share cross-account using Resource Access Manager (RAM)

- You can peer Transit Gateways across regions

- Route Tables: limit which VPC can talk with other VPC

- Works with Direct Connect Gateway, VPN connections

- Supports

IP Multicast(not supported by any other AWS service)1

Transit Gateway: Site-to-Site VPN ECMP

ECMP = Equal-cost multi-path routing.- Routing strategy to allow to forward a packet over multiple best path.

- Use case: create multiple Site-to-Site VPN connections

to increase the bandwidth of your connection to AWS.

VPC - Traffic Mirroring

- Capture the traffic

- From (Source) - ENIs

- To (Targets) - an ENI or a Network Load Balancer

- Source and Target can be in the same VPC or different VPCs (VPC Peering)

- Use cases: content inspection, threat Auto Scaling group monitoring, troubleshooting, …

IPv6 in VPC

IPv4 cannot be disabled for your VPC and subnets- You

can enable IPv6(they’re public IP addresses) to operate indual-stack mode. - Your EC2 instances

- They can communicate using either IPv4 or IPv6 to the internet through an Internet Gatewaywill get at least a private internal IPv4 and a public IPv6

IPv6 Troubleshooting

IPv4 cannot be disabled for your VPC and subnets- So, if you cannot launch an EC2 instance in your subnet

- It’s not because it cannot acquire an IPv6 (the space is very large)

- It’s because there are no available IPv4 in your subnet

Solution: create a new IPv4 CIDR in your subnet

Egress-only Internet Gateway

An egress-only internet gateway is a horizontally scaled, redundant, and highly available VPC component that allows outbound communication over IPv6 from instances in your VPC to the internet, and prevents the internet from initiating an IPv6 connection with your instances. You must update the Route Tables

An egress-only internet gateway is for use with IPv6 traffic only. To enable outbound-only internet communication over IPv4, use a NAT gateway instead.

Reference: Enable outbound IPv6 traffic using an egress-only internet gateway

Carrier gateway

A Carrier gateway is a highly available virtual appliance that provides outbound IPv6 internet connectivity for instances in your VPC. It acts as a gateway between your VPC and the internet, allowing IPv6 traffic to flow in and out of your VPC. By configuring a Carrier gateway, you can enable outbound communication over IPv6 for the EC2 instances in the private subnets while keeping them isolated from direct internet access.

Security Groups

By default, security groupsallow all outbound traffic.- Security group

rules are always permissive; you can’t create rules that deny access. - Security groups are

stateful

The reason for the issue where the new EC2 instances are unable to mount the Amazon EFS file system in a new Availability Zone could be:

The security group for the mount target does not allow inbound NFS connections from the security group used by the EC2 instances.

Explanation: When mounting an Amazon EFS file system from EC2 instances, the security group associated with the mount target should allow inbound NFS (Network File System) connections from the security group used by the EC2 instances. By default, the security group associated with the mount target allows inbound connections from the default security group of the VPC. If the EC2 instances are using a different security group, it needs to be added to the mount target’s security group’s inbound rules to allow NFS connections.

👀 - Only support allow rules. You have to allow incoming traffic from your customers to your instances

The following provides an overview of the steps to enable your VPC and subnets to use IPv6:

Step 1: Associate an IPv6 CIDR Block with Your VPC and Subnets - Associate an Amazon-provided IPv6 CIDR block with your VPC and with your subnets.Step 2: Update Your Route Tables - Update your route tables to route your IPv6 traffic. For a public subnet, create a route that routes all IPv6 traffic from the subnet to the Internet gateway. For a private subnet, create a route that routes all Internet-bound IPv6 traffic from the subnet to an egress-only Internet gateway.Step 3: Update Your Security Group Rules - Update your security group rules to include rules for IPv6 addresses. This enables IPv6 traffic to flow to and from your instances. If you’ve created custom network ACL rules to control the flow of traffic to and from your subnet, you must include rules for IPv6 traffic.Step 4: Change Your Instance Type - If your instance type does not support IPv6, change the instance type. If your instance type does not support IPv6, you must resize the instance to a supported instance type. In the example, the instance is an m3.large instance type, which does not support IPv6. You must resize the instance to a supported instance type, for example, m4.large.Step 5: Assign IPv6 Addresses to Your Instances - Assign IPv6 addresses to your instances from the IPv6 address range of your subnet.Step 6: (Optional) Configure IPv6 on Your Instances - If your instance was launched from an AMI that is not configured to use DHCPv6, you must manually configure your instance to recognize an IPv6 address assigned to the instance.

VPC Section Summary

CIDR- IP RangeVPC- Virtual Private Cloud => we define a list of IPv4 & IPv6 CIDRSubnets- tied to an AZ, we define a CIDRInternet Gateway- at the VPC level, provide IPv4 & IPv6 Internet AccessRoute Tables- must be edited to add routes from subnets to the IGW, VPC Peering Connections, VPC Endpoints, …Bastion Host- public EC2 instance to SSH into, that has SSH connectivity to EC2 instances in private subnetsNAT Instances- gives Internet access to EC2 instances in private subnets. Old, must be setup in a public subnet, disable Source / Destination check flagNAT Gateway- managed by AWS, provides scalable Internet access to private EC2 instances, IPv4 onlyPrivate DNS+ Route 53 - enable DNS Resolution + DNS Hostnames (VPC) -NACL- stateless, subnet rules for inbound and outbound, don’t forget Ephemeral PortsSecurity Groups- stateful, operate at the EC2 instance levelReachability Analyzer- perform network connectivity testing between AWS resourcesVPC Peering- connect two VPCs with non overlapping CIDR, non-transitiveVPC Endpoints- provide private access to AWS Services (S3, DynamoDB, CloudFormation, SSM) within a VPCVPC Flow Logs- can be setup at the VPC / Subnet / ENI Level, for ACCEPT and REJECT traffic, helps identifying attacks, analyze using Athena or CloudWatch Logs InsightsSite-to-Site VPN- setup a Customer Gateway on DC, a Virtual Private Gateway on VPC, and site-to-site VPN over public InternetAWS VPN CloudHub- hub-and-spoke VPN model to connect your sitesDirect Connect- setup a Virtual Private Gateway on VPC, and establish a direct private connection to an AWS Direct Connect LocationDirect Connect Gateway- setup a Direct Connect to many VPCs in different AWS regionsAWS PrivateLink / VPC Endpoint Services:- Connect services privately from your service VPC to customers VPC

- Doesn’t need VPC Peering, public Internet, NAT Gateway, Route Tables

- Must be used with Network Load Balancer & ENI

ClassicLink- connect EC2-Classic EC2 instances privately to your VPCTransit Gateway- transitive peering connections for VPC, VPN & DXTraffic Mirroring- copy network traffic from ENIs for further analysisEgress-only Internet Gateway- like a NAT Gateway, but for IPv6

Networking Costs in AWS per GB

- Use Private IP instead of Public IP for good savings and better network performance

- Use same AZ for maximum savings (at the cost of high availability) - Exam 👀

S3 Data Transfer Pricing - Analysis for USA

S3 ingress: freeS3 to Internet: $0.09 per GBS3 Transfer Acceleration:- Faster transfer times (50 to 500% better)

- Additional cost on top of Data Transfer Pricing: +$0.04 to $0.08 per GB

S3 to CloudFront: $0.00 per GBCloudFront to Internet: $0.085 per GB (slightly cheaper than S3)- Caching capability (lower latency)

- Reduce costs associated with S3 Requests Pricing (7x cheaper with CloudFront)

S3 Cross Region Replication: $0.02 per GB

AWS Network Firewall - 👀 OJO QUESTION

Protect your entire Amazon VPC- Pass traffic through only from known AWS service domains or IP address endpoints, such as Amazon S3.

- Use custom

lists of known bad domains to limit the types of domain names that your applications can access. - Perform

deep packet inspectionDPI on traffic entering or leaving your VPC. - Use stateful protocol detection to filter protocols like HTTPS, independent of the port used.

- From Layer 3 to Layer 7 protection

- Any direction, you can inspect

VPCtoVPCtrafficOutboundto internetInboundfrom internetTo / from Direct Connect & Site-to-Site VPN

- Internally, the AWS Network Firewall uses the AWS Gateway Load Balancer 👀

- Rules can be centrally managed

cross-accountbyAWS Firewall Managerto apply to many VPCs

Network Firewall - Fine Grained Controls

Supports 1000s of rules

IP & port - example: 10,000s of IPs filtering

Protocol - example: block the SMB protocol for outbound communications

Stateful domain list rule groups: only allow outbound traffic to *.mycorp.com or third-party software repo

General pattern matching using regex

Traffic filtering: Allow, drop, or alert for the traffic that matches the rulesActive flow inspectionto protect against network threats with intrusion-prevention capabilities (like Gateway Load Balancer, but all managed by AWS)Send logs of rule matches to Amazon S3, CloudWatch Logs, Kinesis Data Firehose

CloudFormation

cfn-init

- AWS::CloudFormation::Init must be in the Metadata of a resource

- With the cfn-init script, it helps make complex EC2 configurations readable

- The EC2 instance will query the CloudFormation service to get init data

- Logs go to /var/log/cfn-init.log

(More readable compared with user data scripts)

1 | UserData: |

1 | Metadata: |

cfn-signal & wait conditions

We still don’t know how to tell CloudFormation that the EC2 instance got properly configured after a

cfn-initFor this, we can use the

cfn-signalscript!- We run cfn-signal right after cfn-init

- Tell CloudFormation service to keep on going or fail

We need to define

WaitCondition:- Block the template until it receives a signal from cfn-signal

- We attach a

CreationPolicy(also works on EC2, ASG)- The creation policy is

invoked onlywhen AWS CloudFormation creates the associated resource. Currently, the only AWS CloudFormation resources that support creation policies areAWS::AutoScaling::AutoScalingGroup, AWS::EC2::Instance, and AWS::CloudFormation::WaitCondition.

- The creation policy is

1 | # Start cfn-signal to the wait condition |

1 | SampleWaitCondition: |

CloudFormation StackSets

- Create, update, or delete stacks across multiple accounts and regions with a single operation

- Administrator account to create StackSets

- Trusted accounts to create, update, delete stack instances from StackSets

Use AWS CloudFormation StackSets for Multiple Accounts in an AWS Organization:

Use AWS CloudFormation StackSets to deploy a template to each account to create the new IAM roles.

Explanation: AWS CloudFormation StackSets allows you to deploy a CloudFormation template across multiple AWS accounts. By using StackSets, you can create and manage the same IAM roles in each account within the organization. This ensures consistent deployment of roles across accounts and simplifies the management process.

Reference: New: Use AWS CloudFormation StackSets for Multiple Accounts in an AWS Organization

QUESTION To lunch the last AMI.

Use the Parameters section in the template to specify the Systems Manager (SSM) Parameter, which contains the latest version of the Windows regional AMI ID.

1 | Parameters: |

UpdatePolicy attribute - 👀 EXAM

By adding the UpdatePolicy attribute in CloudFormation and enabling the WaitOnResourceSignals property, the Auto Scaling group update process will be handled more gracefully. This approach allows CloudFormation to monitor the health and success of each instance during the update process before moving on to the next instance.

Appending a health check at the end of the user data script allows the instance to signal CloudFormation that it has successfully completed its initialization. This helps ensure that the instance is fully operational before proceeding to the next instance in the Auto Scaling group update process.

1 | CreationPolicy: |

👀 DependsOn attribute

With the DependsOn attribute, you can specify that the creation of a specific resource follows another. When you add a DependsOn attribute to a resource, that resource is created only after the creation of the resource specified in the DependsOn attribute.

Set Tags consistently in AWS across all accounts

Use the CloudFormation Resource Tags property to apply tags to certain resource types upon creation.

1 | Resources: |

Output and Export

1 | Outputs: |

1 | Resources: |

No conditions in parameters

1 | Parameters: |

!GetAtt- The Fn::GetAtt intrinsic function returns the value of an attribute from a resource in the template. This example snippet returns a string containing the DNS name of the load balancer with the logical name myELB - YML : !GetAtt myELB.DNSName JSON : “Fn::GetAtt” : [ “myELB” , “DNSName” ]!Sub- The intrinsic function Fn::Sub substitutes variables in an input string with values that you specify. In your templates, you can use this function to construct commands or outputs that include values that aren’t available until you create or update a stack.!Ref- The intrinsic function Ref returns the value of the specified parameter or resource.!FindInMap- The intrinsic function Fn::FindInMap returns the value corresponding to keys in a two-level map that is declared in the Mappings section. For example, you can use this in the Mappings section that contains a single map, RegionMap, that associates AMIs with AWS regions.

AWS Backup

- Fully managed service

Centrally manage and automate backups across AWS services.- No need to create custom scripts and manual processes

- Supported services:

- Amazon

EC2 / Amazon EBS - Amazon

S3 - Amazon

RDS (all DBs engines) / Amazon Aurora / Amazon DynamoDB - Amazon

DocumentDB / Amazon Neptune - Amazon

EFS / Amazon FSx (Lustre & Windows File Server)

- Amazon

- AWS Storage Gateway (Volume Gateway)

- Supports cross-region backups

- Supports cross-account backups

- On-Demand and Scheduled backups

- Tag-based backup policies

- You create backup policies known as

Backup Plans- Backup frequency (every 12 hours, daily, weekly, monthly, cron expression)

- Backup window

- Transition to Cold Storage (Never, Days, Weeks, Months, Years)

- Retention Period (Always, Days, Weeks, Months, Year

👀 QUESTIONUESTION

AWS Backup is a fully managed and cost-effective backup service that simplifies and automates data backup across AWS services including Amazon EBS, Amazon EC2, Amazon RDS, Amazon Aurora, Amazon DynamoDB, Amazon EFS, and AWS Storage Gateway. In addition, AWS Backup leverages AWS Organizations to implement and maintain a central view of backup policy across resources in a multi-account AWS environment. Customers simply tag and associate their AWS resources with backup policies managed by AWS Backup for Cross-Region data replication.

👀 QUESTIONUESTION For the production account, a SysOps administrator must ensure that all data is backed up daily for all current and future Amazon EC2 instances and Amazon Elastic File System (Amazon EFS) file systems. Backups must be retained for 30 days

Create a backup plan in AWS Backup. Assign resources by resource ID, selecting all existing EC2 and EFS resources that are running in the account. Edit the backup plan daily to include any new resources. Schedule the backup plan to run every day with a lifecycle policy to expire backups after 30 days.

Explanation: AWS Backup provides a centralized and automated solution for backing up data. By creating a backup plan and assigning resources by resource ID, you can easily include all existing EC2 instances and EFS file systems in the backup process. Editing the backup plan daily ensures that any new resources are automatically included in the backups. By scheduling the backup plan to run every day and configuring a lifecycle policy to expire backups after 30 days, you meet the requirement of daily backups with a retention period of 30 days.

AWS Backup does not reboot EC2 instances - 👀 QUESTIONUESTION

AWS Backup does not reboot EC2 instances at any time. To maintain the file integrity of images created, you have to apply the reboot parameter when taking images.

To create a Lambda function that calls the CreateImage API with a reboot parameter and then schedule the function to run on a daily basis via Amazon EventBridge (Amazon CloudWatch Events).

AWS Backup Vault Lock

- Enforce a WORM (Write On1ce Read Many) state for all the backups that you store in your AWS Backup Vault

- Additional layer of defense to protect your backups against:

- Inadvertent or malicious delete operations

- Updates that shorten or alter retention periods

- Even the root user cannot delete backups when enabled

AWS Shared Responsibility Model

- AWS responsibility - Security

ofthe Cloud- Protecting infrastructure (hardware, software, facilities, and networking) that runs all the AWS services

- Managed services like S3, DynamoDB, RDS, etc.

- Customer responsibility - Security

inthe Cloud- For EC2 instance, customer is responsible for management of the guest OS (including security patches and updates), firewall & network configuration, IAM

- Encrypting application data

- Shared controls:

- Patch Management, Configuration Management, Awareness & Training

DDoS (Distributed Denial-of-service) Protection on AWS

AWS Shield Standard: protects against DDoS attack for your website and applications, for all customers at no additional costs.AWS Shield Advanced: 24/7 premium DDoS protection.AWS WAF: Filter specific requests based on rules.CloudFront and Route 53:- Availability protection using global edge network

- Combined with AWS Shield, provides attack mitigation at the edge

- Be ready to scale - leverage

AWS Auto Scaling.

AWS WAF - Web Application Firewall

Protects your web applications from common web exploits (Layer 7)

Layer 7 is HTTP(vs Layer 4 is TCP)Deploy on

Application Load Balancer, API Gateway, CloudFrontDefine Web ACL (Web Access Control List):

- Rules can include:

IP addresses, HTTP headers, HTTP body, or URI strings - Protects from common attack -

SQL injectionandCross-Site Scripting (XSS). - Size constraints,

geo-match (block countries) Rate-based rules(to count occurrences of events) -for DDoS protection

- Rules can include:

Penetration Testing on AWS Cloud

- AWS customers are welcome to carry out security assessments or penetration tests against their AWS infrastructure

without prior approval for 8 services:- Amazon EC2 instances, NAT Gateways, and Elastic Load Balancers

- Amazon RDS

- Amazon CloudFront

- Amazon Aurora

- Amazon API Gateways

- AWS Lambda and Lambda Edge functions

- Amazon Lightsail resources

- Amazon Elastic Beanstalk environments

Penetration Testing on your AWS Cloud

Prohibited Activities- DNS zone walking via Amazon Route 53 Hosted Zones

- Denial of Service (DoS), Distributed Denial of Service (DDoS), Simulated DoS, Simulated DDoS

- Port flooding

- Protocol flooding

- Request flooding (login request flooding, API request flooding)

Read more: https://aws.amazon.com/security/penetration-testing/

Amazon Inspector: Securiry Compliance for EC2 and Sofware deployed on AWS.

AWS Inspector - 👀 EXAM

- Amazon Inspector is

used for security compliance of instances and applications deployed on AWS. - Amazon Inspector

checksforunintended network accessibilityof your Amazon EC2 instances andvulnerabilitieson those EC2 instances. - Amazon Inspector also

integrateswithAWS Security Hubto provide aviewof yoursecurity posture across multiple AWS accounts.

Amazon Inspector is an automated security assessment service that helps you test the network accessibility of your Amazon EC2 instances and the security state of your applications running on the instances.

An Amazon Inspector assessment report can be generated for an assessment run once it has been successfully completed. An assessment report is a document that details what is tested in the assessment run, and the results of the assessment. The results of your assessment are formatted into a standard report, which can be generated to share results within your team for remediation actions, to enrich compliance audit data, or to store for future reference.

You can select from two types of report for your assessment, a findings report or a full report. The findings report contains an executive summary of the assessment, the instances targeted, the rules packages tested, the rules that generated findings, and detailed information about each of these rules along with the list of instances that failed the check. The full report contains all the information in the findings report and additionally provides the list of rules that were checked and passed on all instances in the assessment target.

👀 Amazon Inspector

Automated Security Assessments- For

EC2 instances- Leveraging the AWS System Manager (SSM) agent

- Analyze against unintended network accessibility

- Analyze the running OS against known vulnerabilities

- For Container Images push to

Amazon ECR- Assessment of Container Images as they are pushed Amazon ECR

- For

Lambda Functions- Identifies software vulnerabilities in function code and package ependencies

- Assessment of functions as they are deployed

-> Reporting & integration with AWS Security Hub

To provide a view of your security posture across multiple AWS accounts.

-> Send findings to Amazon Event Bridge

AWS Security Hub - TODO

Supports automated security checks aligned to the Center for Internet Security’s (CIS) AWS Foundations Benchmark version 1.4.0 requirements for Level 1 and 2 (CIS v1.4.0).

CIS AWS Foundations Benchmark - TODO

Serves as a set of security configuration best practices for AWS. These industry-accepted best practices provide you with clear, step-by-step implementation and assessment procedures. Ranging from operating systems to cloud services and network devices, the controls in this benchmark help you protect the specific systems that your organization uses.

https://docs.aws.amazon.com/securityhub/latest/userguide/cis-aws-foundations-benchmark.html https://aws.amazon.com/about-aws/whats-new/2022/11/security-hub-center-internet-securitys-cis-foundations-benchmark-version-1-4-0/

What does Amazon Inspector evaluate?

Only for EC2 instances, Container Images & Lambda functions-EXAM- Continuous scanning of the infrastructure, only when needed

- Package vulnerabilities (EC2, ECR & Lambda) - database of CVE

- Network reachability (EC2)

- A risk score is associated with all vulnerabilities for prioritization

Amazon Inspector discovers potential security issues by using security rules to analyze AWS resources. Amazon Inspector also integrates with AWS Security Hub to provide a view of your security posture across multiple AWS accounts.

Amazon GuardDuty - 👀 EXAM

Intelligent Threat discovery to protect your AWS Account.- Uses Machine Learning algorithms, anomaly detection, 3rd party data

- One click to enable (30 days trial), no need to install software

- Input data includes:

CloudTrail Events Logs- unusual API calls, unauthorized deploymentsCloudTrail Management Events- create VPC subnet, create trail, …CloudTrail S3 Data Events- get object, list objects, delete object, …

VPC Flow Logs- unusual internal traffic, unusual IP addressDNS Logs- compromised EC2 instances sending encoded data within DNS queriesOptional Feature- EKS Audit Logs, RDS & Aurora, EBS, Lambda, S3 Data Events…

- Can setup

EventBridge rulesto be notified in case of findings - EventBridge rules can target AWS Lambda or SNS

Can protect against CryptoCurrency attacks (has a dedicated “finding” for it)- 👀 EXAM

AWS Macie

- Amazon Macie is a fully managed data security and data privacy service that uses

machine learning and pattern matching to discover andprotect your sensitive data in AWS. - Macie helps identify and alert you to

sensitive data, such as personally identifiable information (PII). - Notify to Amazon EventBridge => Integrations

QUESTION AWS Trusted Advisor

Trusted Advisor provides real-time guidance to help users follow AWS best practices to provision their resources. Hight level account assessment.

E.g. AWS Trusted Advisor checks for service usage that is more than 80% of the service limit.

Check categories - 👀 EXAM

Cost optimizationPerformanceSecurityFault toleranceService limits

Trusted Advisor - Support Plans - Exam

7 CORES CHECKS for Basic & Developer Support plan

- S3 Bucket Permissions

- Security Groups - Specific Ports Unrestricted

- IAM Use (one IAM user minimum)

- MFA on Root Account

- EBS Public Snapshots

- RDS Public Snapshots

- Service Limits

FULL CHECKS

- Full Checks available on the 5 categories

- Ability to set CloudWatch alarms when reaching limits

Programmatic Access using AWS Support API- Exa,

AWS KMS (Key Management Service)

- Anytime you hear “encryption” for an AWS service, it’s most likely KMS

- AWS manages encryption keys for us

- Fully integrated with IAM for authorization

- Easy way to control access to your data

Able to audit KMS Key usage using CloudTrail- Exam- Seamlessly integrated into most AWS services (EBS, S3, RDS, SSM…)

- Never ever store your secrets in plaintext, especially in your code!

- KMS Key Encryption also available through API calls (SDK, CLI)

- Encrypted secrets can be stored in the code / environment variables

KMS Automatic Key Rotation

For Customer-managed CMK(not AWS managed CMK)- If enabled: automatic key rotation happens

every 1 year. - Previous key is kept active so you can decrypt old data

- New Key has the same CMK ID (only the backing key is changed)

KMS Manual Key Rotation

When you want to rotate key every 90 days, 180 days, etc...- New Key has a different CMK ID

- Keep the previous key active so you can decrypt old data

- Better to use aliases in this case (to hide the change of key for the application)

- Good solution to rotate CMK that are not eligible for automatic rotation (

like asymmetric CMK)